
Designing4Engagement: Design Thinking in the Program Quality Review Process

Program review is a systematic, ongoing process intended to improve educational quality within a program and across an institution. This continual, reflective process, however, does not always carry over beyond the program review exercise itself, nor does it always involve stakeholders in a meaningful, organic way in identifying and implementing program improvements and innovative initiatives. A real or perceived lack of transparency regarding quality assurance recommendations and outcomes can further undermine the value of the program review process.

After teaching at the postsecondary level for 18 years, I was seconded as a curriculum development specialist and program quality reviewer. I am also a creativity facilitator, so my new role became an exploration of how design thinking and creative problem-solving strategies could increase stakeholder participation and create a more equitable, democratic and inclusive program review process. The ideas presented here were employed at two higher education institutions. They effectively transformed stakeholder attitudes while revealing the limitations of the traditional "SWOT" (Strengths, Weaknesses, Opportunities, Threats) method. Program reviewers undoubtedly bring their own techniques to the process. My training, however, was based on what I perceived to be a static, non-inclusive and intimidating method of enquiry that led to hesitant, redundant and all-too-familiar responses.

The technique I observed resembled the Spanish Inquisition, "sucking" energy out of the room as stakeholders were marched through familiar, pre-planned questions, one person and one question at a time. It occurred to me that this format discouraged listening and sacrificed any attempt at dialogue, depth, insightful problem solving and program innovation.

Furthermore, stakeholders' prior experiences set the stage for resistance and, in some cases, open hostility, leading to "witch hunt" sessions or formal refusals to participate. These feelings stemmed from a lack of integrity and transparency regarding who selected, prioritized and generated the information contained in the final reports. Reluctance to buy in was further compounded by stakeholders not knowing what became of the reports and not seeing program changes based on their recommendations.

Project Proposal:

I received internal funding for Designing4Engagement: Design Thinking in the Program Quality Review Process. As an educator, creative thinker and facilitator, I aimed to make the process engaging, informative and relevant for all. As a critically responsive educator, I wanted to design a more equitable, adaptive and inclusive framework for program review, curriculum design and institutional revitalization. In essence, I wanted to create a more meaningful experience by integrating the participatory and anti-oppressive tools of Augusto Boal's Theatre of the Oppressed, the inclusive, forward-focused, empathetic practices of Design Thinking and Creative Problem Solving, the learner-centered focus of Outcomes-Based Education, and the Backward Design approach from Wiggins and McTighe's Understanding by Design (2005).

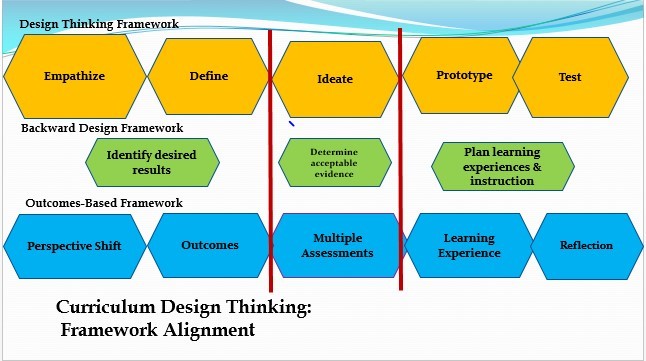

This may sound complicated, but my interdisciplinary mind saw alignment between these diverse areas and a way to empower stakeholders through an innovative, repeatable approach to curriculum revitalization (Image 1).

The overall aim of program quality review is to examine programs and improve deliverables for student success. Just as when planning a dinner party for our friends, we need to understand everyone involved beyond superficial appearances and needs. Interfacing the Design Thinking, Backward Design and Outcomes-Based Education frameworks invites institutions to understand the needs of increasingly diverse stakeholders. This empathetic stance is one of the main principles of good design and is reflected in Ontario colleges' adherence to vocational learning outcomes, program learning outcomes and course learning outcomes. It is a transformational approach that shifts the focus from teaching to learning, from content to process, and from skills to thinking. Few of us would plan a dinner party without taking the unique needs and dynamics of our guests into account. Yet the program review approach I was mentored in did just that: stock questions produced stock answers that were hardly transformational.

I wanted to explore how the program quality process might be enhanced by aligning empathetic learning frameworks with the intuitive, positive and universal process of creative problem solving. After receiving a small research grant, I began investigating how the integration of these frameworks could:

1) Generate and nurture positive stakeholder participation in, and ownership of, the development and implementation of actionable opportunities identified in the review process.

2) Envision more innovative and comprehensive actionable opportunities for curriculum, program and institutional renewal.

3) Provide faculty with professional development in creative problem solving and design thinking during the program review process, for use within their unique contexts.

4) Increase stakeholder sessions’ effectiveness regarding purpose clarity, information validity, participant engagement, energy, awareness and transferability, as well as process transparency.

5) Create reproducible tools for an innovative, stakeholder-centered approach to the curation, management and implementation of program review findings, interpretations and subsequent actionable opportunities.

Methodology:

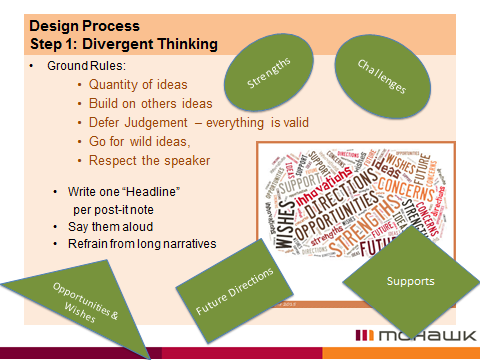

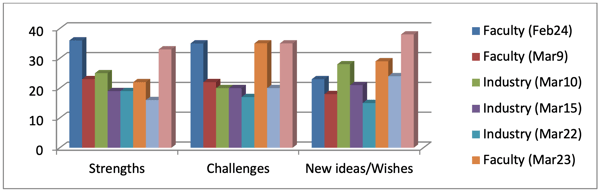

I used a mixed-methods approach that included pre- and post-surveys, my own reflective journals, testimonials and quantitative analysis of stakeholder participation. Since reflective enquiry is integral to the iterative nature of design thinking and creative problem solving, I carried out self-evaluations and process journaling after each stakeholder session in order to refine my technique and framework. Image 2 shows the final iterations of Steps 1-3 of the process.

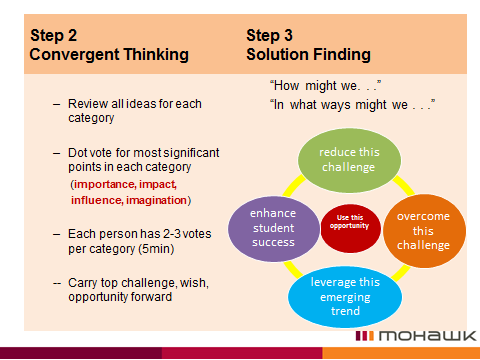

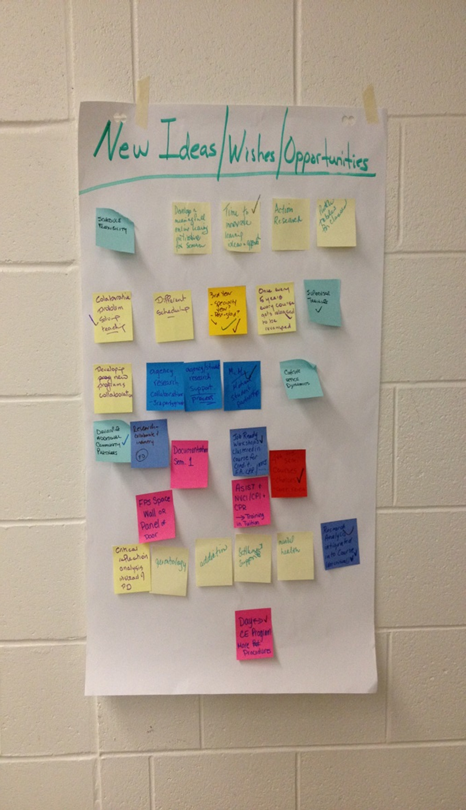

Achievement of the outcomes was measured by the volume of Post-it Notes (Image 3) that participants generated during Step 1 (Fact Finding), Step 2 (Prioritizing) and Step 3 (Solution Finding).

While Step 1 is already customary in program review in the form of a SWOT analysis, Steps 2 and 3 proved to be valuable additions: participants prioritized the data and contributed to an energetic, inclusive and positive solution-finding stage, as shown in Images 3-5.

Project Outcomes:

"Not everyone can make decisions for the system they exist within" (Design Thinking for Educators, 2nd edition). Yet this pilot came fairly close, as the design thinking and creative problem solving (DT/CPS) process increased and enhanced participation in, and ownership of, program change while providing a manageable framework for large stakeholder sessions. Whereas in my initial training a stakeholder session involved only a handful of "specially selected" students from particularly good classes, it became common for me to involve entire classes of 40 students or more.

In fact, this development arose when one program cohort asked for an audience with the program's associate dean. The success of this large session led to invitations for entire classes to contribute learner-focused program commendations and recommendations.

Overall, the pilot fulfilled the following outcomes:

1) Creating new pedagogical and program review tools for the curation, management and implementation of program review findings that can be duplicated from year to year, program to program, institution to institution.

2) Increasing the identification, clarification and development of new thinking and innovative action items leading to program renewal (Image 6).

3) Enriching stakeholders' participation in, and sense of ownership of, the program review process, specifically in finding solutions to challenges and generating new thinking.

The project also fulfilled additional outcomes, such as building relationships based on alliance rather than compliance among the program quality team, faculty, industry and student stakeholders. It also developed and enriched the institution's partnerships with other organizations, such as the Creative Education Foundation (CEF), the Creative Problem Solving Institute (CPSI), the International Center for Studies in Creativity at SUNY Buffalo, and independent DT/CPS facilitators and researchers.

Project Challenges:

Challenges to the project stemmed from a variety of sources, but DT/CPS tools were used to mitigate them. The major challenges arose from preconceived notions of program quality as compliance rather than alliance. The process was viewed as an irrelevant, work-producing exercise rather than something that could enrich and support the quality of program offerings. These notions led to reserved, skeptical and even hostile participation, as well as outright refusals to participate from certain departments. These preconceptions also resulted in poor participation in the pre- and post-review surveys, which made the data analysis unreliable. Finally, the equitable and democratic process for writing the final report, through the direct transfer of stakeholder priorities and action plans, was never verified because the contract term ended and the role lacked continuity.

New Thinking Around the Challenges:

As in most situations, improved communication, clarity of purpose and transparency in the process helped shift perceptions from compliance to alliance. This transformation was further enhanced by creative problem solving and design thinking, a deliberately empathetic perspective, democratic tools, positive language and facilitation style. Dialogic sessions with solution-finding prompts such as "How might we..." and "What are all the ways...", together with the simple inclusion of the almighty Post-it Note, encouraged inclusion and a synergy of ideas. Outlining a pedagogy of inclusion and compassion at the beginning of each session established an environment of partnership and alliance in quality education and played a significant role in shifting stakeholders' tenor and attitude.

An extension of the first secondment built continuity, allies, trust and accountability. Knowing what had happened to the previous year's reviews increased transparency. But, as mentioned earlier, the second contract ended before the new, unbiased and equitable report-writing process could be tested. Overall, though, the pilot demonstrated an increase in equity and democratic practice within program review and attracted interest from U.S., European and other Canadian institutions looking to rejuvenate their program review processes.

Ironically, when DT/CPS tools were used with faculty and administrators during Program of Study (POS) sessions, they were extremely satisfied with the sessions' efficiency and effectiveness. In one instance, a program coordinator described a session as "magic," given the amount of work accomplished in a mere two hours. Although the outcomes may have seemed to arrive by magic, it was the DT/CPS tools that produced the efficiency, depth and positive, solution-finding environment.

Thus, using the framework with faculty may be the best way to engage them, eventually leading to train-the-trainer sessions in which faculty learn to apply it within their own educational contexts.

Contributions to the Field:

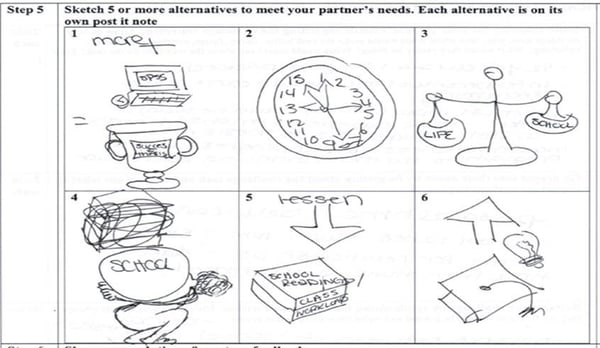

An interesting development in the pilot occurred with postgraduate students at a Canadian university. Unlike the college stakeholder groups, this group struggled to identify strengths, challenges, new thinking and future directions. They wanted the program to change, yet the data they produced dealt only with superficial alterations. We shifted our process to design thinking's question framing, empathetic one-on-one interviewing, point-of-view statements and storyboard iterations. When invited to create visual representations of postgraduate needs and concerns, their drawings told an authentic and consistent story of struggling to balance school, work and life (Image 7) that had not been identified previously. Having been in their shoes decades before, I could empathize with their reluctance to admit this, especially since most of them were women. These drawings then enabled us to revisit the data previously collected and to understand it at a level that encouraged meaningful solutions.

Conclusion:

Design thinking and creative problem solving together form a proven, repeatable framework used in industry and in re-visioning elementary and secondary education. This pilot demonstrated its usefulness as a quality review process at the postsecondary level and as a way of building a positive culture based on alliances and equitable, inclusive principles among quality review teams, faculty, students and industry. Its alignment with Outcomes-Based Education and Backward Design further increased its suitability for professional development, curriculum development and program enhancement. This research also demonstrated a way to shift, where necessary, the perception of program quality from a make-work exercise in compliance to a more beneficial perspective of alliance. This shift contributed to achieving the pilot's outcomes and to interesting revelations about diverse, arts-based ways of reflecting on and collecting information.

During my tenure as program quality reviewer, a great deal of positive work was done to build connections internally, externally, domestically and internationally in applying DT/CPS tools and techniques in higher education. Quality ideas were generated and recorded in an equitable and democratic manner, enriching program renewal. But far outweighing these outcomes was the building of alliances and quality partnerships based on equitable, democratic, empathetic relationships that continue to this day.

– – – –

References

Boal, A. (1985). Theatre of the oppressed. New York: Theatre Communications Group.

Design thinking for educators (2nd ed.). Retrieved from https://www.academia.edu/7856850/Design_Thinking_for_Educators_2nd_Edition

Wiggins, G., & McTighe, J. (2005). Understanding by design. Alexandria: ASCD.

Author Perspective: Educator